Contemporary performance optimization in golf demands a rigorous, quantitative foundation that links observable motion to underlying force production and neural control. This article synthesizes kinematic, kinetic, and neuromuscular perspectives into a coherent analytical framework for golf swing mechanics, with the objective of identifying measurable determinants of performance and providing practitioners (researchers, coaches, and clinicians) with evidence-based pathways to technique refinement. By integrating high-resolution motion capture, force measurement, electromyography, and computational modeling, the framework aims to move beyond descriptive accounts toward mechanistic inference and reproducible intervention design.

Central to the proposed framework are (1) standardized definitions and metrics for key swing phases and outputs (e.g., pelvis-torso coordination, X-factor dynamics, clubhead speed, attack angle, and ball launch conditions), (2) validated measurement protocols that prioritize reliability and ecological validity, and (3) multiscale models that couple segmental dynamics with neuromuscular activation patterns to explain how technique adaptations alter performance and injury risk. Emphasis is placed on robust data processing, sensitivity analysis, and hypothesis-driven use of statistical and machine learning tools to extract actionable insights while avoiding overfitting to idiosyncratic datasets.

The advancement and application of such a framework benefit from principles established in other analytical disciplines, including methodological rigor, interdisciplinary training, and careful attention to sample planning and measurement validity, as exemplified in contemporary analytical chemistry literature (see, e.g., ACS Analytical Chemistry discussions of methodological development and validation) [1-4]. Translating these principles to sport biomechanics entails clear reporting standards, calibration procedures, and cross-laboratory benchmarking so that interventions informed by the framework can be reliably implemented and assessed across populations and settings.

The framework advocates a translational pipeline linking laboratory findings to on-course performance: mechanistic modeling should inform targeted coaching cues and training interventions, which are then evaluated through randomized or cohort studies that measure both technical change and performance outcomes. Through this integrative, evidence-oriented approach, the field can better quantify what matters in the modern golf swing and provide practitioners with defensible strategies for performance enhancement and injury mitigation.

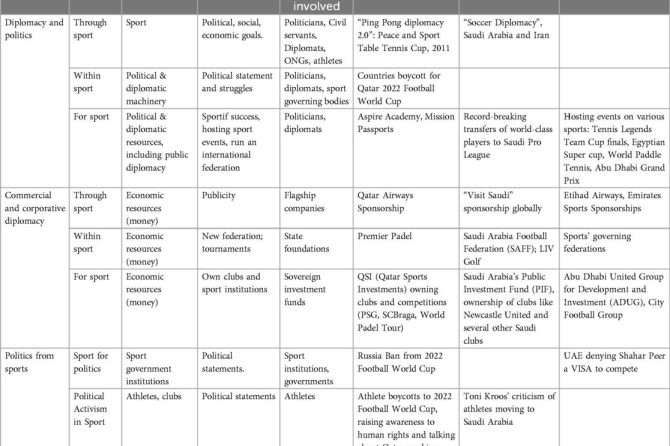

Kinematic Modeling of the Golf Swing: Quantifying Joint Angles, Angular Velocities, and Sequence Optimization for Improved Efficiency

Kinematic modeling frames the golf swing as a multi-segment, articulated motion problem in which joint angles, segment orientations, and relative angular velocities are the primary observables. In contrast to dynamic formulations that explicitly model forces and torques, the kinematic approach isolates the geometry and timing of motion (position, velocity, and acceleration), allowing precise quantification of motion without requiring direct measurement of applied forces. Common parameterizations include Cardan/Euler angles, helical axes, and unit quaternions, the last of which avoid the gimbal-lock singularities of Euler sequences; consistent anatomical coordinate frames and clearly defined joint center estimations are essential for reproducible results. High-fidelity models typically extract **joint angular displacement**, **instantaneous angular velocity**, and **angular acceleration** for each relevant degree of freedom (hips, pelvis, thorax, shoulders, elbows, wrists, and club shaft).

Quantification pipelines rely on marker-based motion capture or inertial-sensor arrays combined with inverse kinematics and robust filtering. Typical preprocessing steps are:

- Marker/sensor registration to anatomical frames;

- Inverse kinematics to estimate joint angles from segment poses;

- Smoothing and differentiation (e.g., low-pass Butterworth then central-difference) to compute angular velocities/accelerations;

- Event detection (backswing start, transition, impact, follow-through) to normalize time-series for ensemble analysis.

These steps yield time-normalized kinematic signatures that support inter-subject comparison and intra-subject progress tracking.
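The smoothing-and-differentiation step can be sketched as follows. This is a minimal, standard-library-only illustration and not a validated pipeline: the function names `butter2_lowpass` and `central_difference`, the 15 Hz cutoff, and the synthetic 2 Hz joint-angle signal with 60 Hz noise are all assumptions chosen for the example. The filter is a second-order Butterworth applied forward and then backward, which removes phase lag.

```python
import math

def butter2_lowpass(signal, cutoff_hz, fs_hz):
    """Zero-lag low-pass: a 2nd-order Butterworth run forward, then backward."""
    k = math.tan(math.pi * cutoff_hz / fs_hz)
    norm = 1.0 / (1.0 + math.sqrt(2.0) * k + k * k)
    b0 = k * k * norm
    b1, b2 = 2.0 * b0, b0
    a1 = 2.0 * (k * k - 1.0) * norm
    a2 = (1.0 - math.sqrt(2.0) * k + k * k) * norm

    def one_pass(x):
        # Start the filter state at the first sample to limit edge transients.
        x1 = x2 = y1 = y2 = x[0]
        y = []
        for xn in x:
            yn = b0 * xn + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
            y.append(yn)
            x2, x1 = x1, xn
            y2, y1 = y1, yn
        return y

    forward = one_pass(list(signal))
    return one_pass(forward[::-1])[::-1]

def central_difference(values, fs_hz):
    """First derivative: central differences inside, one-sided at the ends."""
    dt = 1.0 / fs_hz
    n = len(values)
    deriv = [0.0] * n
    for i in range(1, n - 1):
        deriv[i] = (values[i + 1] - values[i - 1]) / (2.0 * dt)
    deriv[0] = (values[1] - values[0]) / dt
    deriv[-1] = (values[-1] - values[-2]) / dt
    return deriv

# Synthetic joint angle (degrees): 2 Hz motion plus 60 Hz noise, sampled at 200 Hz.
fs = 200.0
t = [i / fs for i in range(400)]
clean = [30.0 * math.sin(2 * math.pi * 2.0 * ti) for ti in t]
noisy = [c + 1.0 * math.sin(2 * math.pi * 60.0 * ti) for c, ti in zip(clean, t)]

smooth = butter2_lowpass(noisy, cutoff_hz=15.0, fs_hz=fs)
omega = central_difference(smooth, fs)  # angular velocity in deg/s
print(f"peak angular velocity (mid-trial): {max(omega[50:350]):.1f} deg/s")
```

Because the filter runs twice, attenuation at the nominal cutoff is doubled (about 6 dB instead of 3 dB), so the cutoff may need adjusting if a specific effective bandwidth is required. In practice a tested library routine would normally replace the hand-written filter.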

Optimization of the swing sequence focuses on the timing and ordering of peak angular velocities, commonly a **proximal-to-distal** transfer in which the hips and torso reach peak velocity before the upper arm, forearm, and wrist release. Sequence metrics include **time-to-peak**, peak-magnitude ratios (e.g., torso-to-hip velocity ratio), and phase lags between adjacent segments. Analytical tools used for optimization include cross-correlation and transfer-function analysis to quantify coupling, dimensionality reduction (PCA/SVD) to identify dominant coordination modes, and constrained nonlinear optimization (e.g., minimize variance in impact angle subject to a target clubhead speed). These methods allow explicit trade-offs between efficiency (energy transfer) and robustness (tolerance to perturbations).
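As a rough illustration of the sequence metrics named above, the sketch below computes time-to-peak, lags between adjacent segments, and a torso-to-hip peak ratio. The segment names, peak times, and amplitudes are hypothetical synthetic values, and `time_to_peak` and `sequence_report` are illustrative helper names rather than an established API.

```python
import math

def time_to_peak(velocity, fs_hz):
    """Return (time in s, magnitude) of the peak absolute angular velocity."""
    idx = max(range(len(velocity)), key=lambda i: abs(velocity[i]))
    return idx / fs_hz, abs(velocity[idx])

def sequence_report(segment_velocities, fs_hz, order):
    """Peak timing per segment, lags between neighbours, and an ordering check."""
    peaks = {name: time_to_peak(segment_velocities[name], fs_hz) for name in order}
    lags = {f"{a}->{b}": peaks[b][0] - peaks[a][0] for a, b in zip(order, order[1:])}
    proximal_to_distal = all(lag > 0 for lag in lags.values())
    return peaks, lags, proximal_to_distal

fs = 200.0

def bump(peak_time_s, amplitude):
    # Hypothetical bell-shaped angular velocity profile (deg/s), 0.5 s long.
    return [amplitude * math.exp(-((i / fs - peak_time_s) / 0.05) ** 2) for i in range(100)]

order = ["pelvis", "thorax", "lead_arm", "club"]
swing = {
    "pelvis": bump(0.20, 450.0),
    "thorax": bump(0.24, 700.0),
    "lead_arm": bump(0.28, 1000.0),
    "club": bump(0.32, 2000.0),
}

peaks, lags, is_p2d = sequence_report(swing, fs, order)
torso_to_hip_ratio = peaks["thorax"][1] / peaks["pelvis"][1]
print(lags, is_p2d, round(torso_to_hip_ratio, 2))
```

Peak picking on raw signals is sensitive to noise, so the velocities passed in are assumed to be filtered already.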

Practical implementation requires attention to sampling rate, filtering, and model granularity: recommended sampling is ≥ 200 Hz for optical capture of high-speed wrist/club motions, with inertial systems of equivalent bandwidth when used in the field. Typical preprocessing parameters and targets are summarized below for reproducibility and translation into coaching feedback. Beyond analysis, kinematic outcomes feed real-time biofeedback systems that can cue athletes on phase timing or on deviation from an optimized temporal template, thereby closing the loop between measurement and motor learning.

| Parameter | Recommended value | Purpose |
|---|---|---|
| Sampling rate | ≥ 200 Hz | Capture wrist/club dynamics |
| Low-pass filter cutoff | 12-20 Hz (optical) | Remove high-frequency noise before differentiation |
| Time-to-peak window | Normalized 0-100% of swing | Compare sequencing across trials |

Kinetic Analysis and Ground Reaction Force Strategies: Translating Force Profiles into Power-Generating Recommendations

Kinetic inquiry reframes the swing as a transient force-generation problem: rather than simply observing kinematics, we quantify how net external and internal forces produce clubhead energy. In biomechanical terms this aligns with classical kinetics (the study of forces and their effect on motion), which emphasizes impulse, peak force, and rate-of-force-development as the proximate determinants of launch energy. Translating force profiles into coaching prescriptions requires mapping multi-axis ground reaction force (GRF) signatures to sequential joint torques and inter-segmental power flows so that targeted interventions address the true mechanical bottleneck instead of superficial swing characteristics.

Analytical practice begins with standardized, repeatable metrics extracted from force plates and synchronized motion capture. Key measurable targets include:

- Peak vertical GRF (multiples of body weight) – reflects load acceptance and energy storage in lower limbs;

- Horizontal (medial-lateral and anterior-posterior) impulses – indicate push-off timing and lateral weight transfer;

- Rate of force development (RFD) – correlates with the temporal bandwidth available for power transfer to the torso and arms;

- Center-of-pressure (CoP) progression – identifies how plantar loading patterns sequence proximal-to-distal power generation.

These metrics permit objective comparison across swings and provide the quantitative basis for specific strength, sequencing, and mobility recommendations.
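A minimal sketch of how the first three metrics might be extracted from force-plate samples is given below. It assumes vertical and anterior-posterior force arrays in newtons, a caller-supplied onset index (event detection is not shown), and a synthetic triangular loading profile; `grf_metrics` and its dictionary keys are hypothetical names.

```python
def grf_metrics(fz_n, fap_n, fs_hz, body_mass_kg, onset_idx=0, g=9.81):
    """Peak vertical GRF (in body weights), A-P impulse, and mean RFD to peak."""
    dt = 1.0 / fs_hz
    body_weight_n = body_mass_kg * g
    peak_idx = max(range(len(fz_n)), key=lambda i: fz_n[i])

    # Trapezoidal integration of anterior-posterior force gives impulse in N*s.
    ap_impulse = sum(0.5 * (fap_n[i] + fap_n[i + 1]) * dt for i in range(len(fap_n) - 1))

    # Mean rate of force development from the supplied onset to the vertical peak.
    rise_time_s = (peak_idx - onset_idx) * dt
    if rise_time_s > 0:
        rfd = (fz_n[peak_idx] - fz_n[onset_idx]) / rise_time_s
    else:
        rfd = float("nan")

    return {
        "peak_vertical_bw": fz_n[peak_idx] / body_weight_n,
        "ap_impulse_ns": ap_impulse,
        "rfd_n_per_s": rfd,
        "time_to_peak_ms": rise_time_s * 1000.0,
    }

# Synthetic trial: 80 kg golfer, 1000 Hz plate, Fz ramps from 1.0 to 2.5 BW in 150 ms.
mass_kg, fs = 80.0, 1000.0
bw = mass_kg * 9.81
fz = [bw * (1.0 + 1.5 * i / 150) for i in range(151)]
fz += [bw * (2.5 - 1.5 * i / 150) for i in range(1, 151)]
fap = [100.0] * len(fz)  # constant 100 N forward force, for illustration only

metrics = grf_metrics(fz, fap, fs, mass_kg)
print(metrics)
```

CoP progression is omitted because it needs plate moment channels and geometry that vary by manufacturer.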

| Metric | Typical Target (Driver) | Interpretive Action |
|---|---|---|
| Peak vertical GRF | 2.2-2.8 × BW | Emphasize eccentric-concentric leg drive drills |
| Anterior-posterior impulse | >20% total impulse forward by impact | Train timed lateral push-off and hip extension |
| RFD to peak | <200 ms (from initiation) | Power-focused plyometrics and ballistic squats |

Operationalizing these results requires a structured, evidence-based feedback loop: capture high-fidelity GRF and kinematic data, extract the above metrics, prescribe a constrained set of mechanical drills, and re-test under matched conditions. Recommended interventions include targeted plyometrics to raise RFD, resisted lateral band work to improve horizontal impulse, and tempo-specific swing drills that re-time CoP progression. Implement with progressive loading, integrate wearable GRF proxies for on-course monitoring, and include injury-risk checks (hip and knee valgus, lumbar shear) so that force-maximization strategies remain within safe biomechanical boundaries.

Integrating Inertial Measurement Units and Optical Motion Capture: Best Practices for Data Fidelity and Markerless Alternatives

Combining body-worn inertial sensors with camera-based tracking leverages complementary strengths: **IMUs** provide continuous, orientation-rich data with resilience to occlusion and on-course portability, while optical systems deliver high-spatial-accuracy global pose and kinematic segment lengths. Achieving coherent fusion requires explicit agreement of coordinate frames, consistent anthropometric scaling, and robust time synchronization. Implement a two-stage calibration that first aligns sensor-to-segment transforms in a neutral pose and then refines offsets using dynamic calibration trials; this reduces the soft-tissue and attachment-frame mismatch that otherwise produces systematic bias in joint angles and club-head kinematics.

Maintain data fidelity through reproducible processing pipelines and the following operational best practices:

- Clock synchronization: use hardware triggers or network time protocol with timestamp correction to limit temporal misalignment to <5 ms.

- Sampling parity: match or upsample/downsample streams and apply anti-aliasing filters to prevent interpolation artifacts.

- Cross-calibration: perform simultaneous static and dynamic trials to estimate sensor-to-optical transform matrices.

- Filtering & sensor fusion: use stable algorithms (e.g., EKF/UKF or constrained smoothing) with biomechanics-informed priors to constrain improbable rotations and reduce drift.

- Attachment protocol: document mounting locations, orientation markers, and fixation methods to minimize soft-tissue artifacts and to ensure repeatability across sessions and subjects.

Where markerless solutions are desirable (field use, minimal setup), integrate them as a complementary modality rather than a standalone replacement for rigorous biomechanics assessment. Modern pose-estimation networks (transfer-learning models adapted for golf posture) can reconstruct global skeletons but typically suffer from scale ambiguity and occasional joint misidentification during high-speed motion. Fusing markerless 2D/3D estimates with IMU-derived segment orientations addresses scale and drift: incorporate IMU-derived segment rotation priors into the pose optimization step, or use IMUs to provide continuous rotational constraints while the camera stream supplies global translation and limb-length regularization. For data fusion, employ a probabilistic framework that weights modalities by context-dependent confidence (e.g., increase IMU weight during occlusion frames).
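One simple way to express confidence-weighted fusion is inverse-variance weighting of two estimates of the same joint angle. The sketch below is a deliberately reduced stand-in for a full EKF/UKF: the variances, the occlusion inflation factor, and the helper names `camera_variance` and `fuse_angle` are assumptions, and plain averaging of angles is only valid away from the ±180° wrap-around.

```python
def camera_variance(base_var_deg2, occluded, inflation=100.0):
    """Inflate the camera variance on occluded frames so the IMU dominates."""
    return base_var_deg2 * inflation if occluded else base_var_deg2

def fuse_angle(imu_deg, cam_deg, imu_var_deg2, cam_var_deg2):
    """Inverse-variance weighted mean of two angle estimates and its variance."""
    w_imu = 1.0 / imu_var_deg2
    w_cam = 1.0 / cam_var_deg2
    fused = (w_imu * imu_deg + w_cam * cam_deg) / (w_imu + w_cam)
    return fused, 1.0 / (w_imu + w_cam)

# Hypothetical frame: IMU reads 42 deg (variance 4), camera reads 40 deg (variance 1).
visible = fuse_angle(42.0, 40.0, 4.0, camera_variance(1.0, occluded=False))
hidden = fuse_angle(42.0, 40.0, 4.0, camera_variance(1.0, occluded=True))
print(visible, hidden)
```

With the camera visible the fused value sits close to the camera estimate; on an occluded frame it moves toward the IMU estimate, which is the behaviour the paragraph above describes.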

Quality assurance should be quantitative and repeatable. Use standardized error metrics (RMSE for joint angles, absolute positional error for club and COM, and latency measurements) and report confidence intervals across repeated swings. Typical benchmark targets for integrated systems are shown below; adapt thresholds to study aims and participant level.

| Measure | Optical (typical) | IMU-fused (typical) |
|---|---|---|
| Joint-angle RMSE | 1-3° | 2-5° |
| Positional error (club head) | 1-10 mm | 10-25 mm |
| Latency | <1-10 ms | 5-30 ms |

When reporting results, include repeatability (ICC), sensitivity analyses to sensor placement, and a validation trial against a gold-standard optical baseline where feasible. This transparent evaluation enables evidence-based decisions about trade-offs between portability, cost, and kinematic fidelity for applied golf-swing analysis.
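The error metrics used for quality assurance in this section can be computed in a few lines. The sketch below shows joint-angle RMSE against a reference system and a mean with an approximate 95% confidence interval across repeated swings. The interval uses a normal approximation (1.96 standard errors), which is an assumption that is optimistic for small samples; all numbers are made up for illustration.

```python
import math
import statistics

def rmse(reference, estimate):
    """Root-mean-square error between two equally sampled series."""
    if len(reference) != len(estimate):
        raise ValueError("series must have the same length")
    squared = [(r - e) ** 2 for r, e in zip(reference, estimate)]
    return math.sqrt(sum(squared) / len(squared))

def mean_with_ci95(values):
    """Mean and normal-approximation 95% confidence interval."""
    mean = statistics.mean(values)
    half_width = 1.96 * statistics.stdev(values) / math.sqrt(len(values))
    return mean, mean - half_width, mean + half_width

# Hypothetical joint angles (degrees) from the optical reference and the fused system.
optical = [10.0, 20.0, 30.0]
fused = [11.0, 19.0, 32.0]
print(f"single-swing RMSE: {rmse(optical, fused):.2f} deg")

# Hypothetical per-swing RMSE values collected over repeated swings.
per_swing_rmse = [2.0, 3.0, 4.0]
print(mean_with_ci95(per_swing_rmse))
```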

Statistical and Machine Learning Frameworks for Movement Pattern Classification: From Cluster Analysis to Predictive Performance Models with Practical Prescriptions

Contemporary analyses of golf swing mechanics synthesize both **statistical** and **machine learning** paradigms to transform raw kinematic traces into actionable movement classes and performance forecasts. Drawing on the broad notion of a “machine” as a device that executes tasks, whether mechanical, electrical, or computational, the term here extends to algorithmic systems that extract regularities from multivariate time series. The methodological pipeline typically moves from hypothesis-driven statistical descriptions (e.g., variance partitioning, mixed models) to data-driven pattern discovery, enabling a principled transition from population-level inference to individualized predictive models.

Unsupervised learning and exploratory statistics serve as the first stage for movement pattern discovery. Common approaches and practical prescriptions include:

- Cluster analysis: K-means, Gaussian mixture models, and hierarchical clustering reveal latent swing archetypes; use silhouette scores and stability analysis to select cluster counts.

- Dimensionality reduction: PCA, t‑SNE, and UMAP condense correlated joint trajectories into interpretable components for downstream modeling.

- Time‑series segmentation: Change‑point detection and dynamic time warping identify phase boundaries (backswing, transition, downswing) for phase‑specific feature extraction.

These techniques should be applied iteratively: unsupervised structure informs label design for supervised learning, while domain‑informed constraints (biomechanical plausibility) guard against physiologically spurious clusters.
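Dynamic time warping, mentioned in the list above, can be illustrated with the classic dynamic-programming recurrence. This is a minimal sketch with an absolute-difference local cost and no window constraint; the two short sequences are invented to show that a time-shifted copy of the same pattern scores as identical under DTW even though a pointwise comparison does not.

```python
def dtw_distance(a, b):
    """Dynamic time warping distance between two 1-D sequences."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] is the best cumulative cost aligning a[:i] with b[:j].
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(cost[i - 1][j],      # a advances, b repeats
                                     cost[i][j - 1],      # b advances, a repeats
                                     cost[i - 1][j - 1])  # both advance
    return cost[n][m]

# Hypothetical normalized pelvis-rotation traces: the second is a delayed copy.
swing_a = [0.0, 0.2, 0.8, 1.0, 0.6, 0.1]
swing_b = [0.0, 0.0, 0.2, 0.8, 1.0, 0.6, 0.1]

pointwise = sum(abs(x - y) for x, y in zip(swing_a, swing_b))
print(f"DTW: {dtw_distance(swing_a, swing_b):.2f}, pointwise: {pointwise:.2f}")
```

The quadratic cost of this version is fine for single swings; long recordings usually call for a banded or library implementation.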

Supervised predictive modeling converts labeled movement classes and engineered features into performance forecasts and prescriptive rules. The table below summarizes representative model families and concise deployment notes for on-range analytics and coaching applications.

| Model Family | Best Use Case | Practical Note |
|---|---|---|
| Random Forest / Gradient Boosting | Robust prediction of shot outcome from heterogeneous features | Relatively resistant to overfitting when tuned; use feature importance for insight |
| SVM / Logistic Regression | Binary classification of swing faults | Prefer when interpretability and small samples matter |
| Recurrent / Temporal CNNs | Real-time sequence prediction and event detection | Require larger labeled datasets; enable phase-aware feedback |

Practical prescriptions for operationalizing these frameworks emphasize data integrity, interpretability, and real-world constraints. Key recommendations:

- Standardize capture: synchronized motion‑capture and IMU sampling with consistent marker/segment conventions to reduce feature noise.

- Cross‑validation rigor: use nested CV and athlete‑level folds to avoid optimistic bias from repeated measures.

- Explainability: prefer models or post‑hoc tools (SHAP, partial dependence) that link features to biomechanical mechanisms for coach adoption.

- Latency and deployment: balance model complexity with inference time for live biofeedback; maintain model monitoring and retraining pipelines as more swing data accrue.

Following these prescriptions ensures that statistical discovery and machine learning prediction converge to produce interpretable, deployable, and athlete‑centered interventions.
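The athlete-level folds recommended above exist to keep every swing from a given golfer on one side of the train/test split. A minimal standard-library sketch is shown below; the round-robin assignment and the name `athlete_level_folds` are illustrative choices, and scikit-learn's `GroupKFold` serves the same purpose in a full pipeline.

```python
def athlete_level_folds(athlete_ids, n_folds):
    """Yield (train_idx, test_idx) pairs with no athlete shared across the split."""
    unique = sorted(set(athlete_ids))
    if n_folds > len(unique):
        raise ValueError("need at least one athlete per fold")
    # Round-robin assignment of athletes (not swings) to folds.
    fold_of = {athlete: i % n_folds for i, athlete in enumerate(unique)}
    folds = []
    for k in range(n_folds):
        test_idx = [i for i, a in enumerate(athlete_ids) if fold_of[a] == k]
        train_idx = [i for i, a in enumerate(athlete_ids) if fold_of[a] != k]
        folds.append((train_idx, test_idx))
    return folds

# Hypothetical dataset: eight swings recorded from four golfers.
ids = ["g1", "g1", "g2", "g2", "g3", "g3", "g4", "g4"]
folds = athlete_level_folds(ids, n_folds=2)
for train_idx, test_idx in folds:
    print("train:", train_idx, "test:", test_idx)
```

Splitting by swing instead of by athlete would let a model recognise individuals rather than movement patterns, which is the optimistic bias the recommendation warns about.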

Biomechanical Constraint Identification and Personalized Intervention Design: Assessing Mobility, Stability, and Motor Control for Targeted Training

A systematic framework begins with delineating the athlete’s primary mechanical limitations through convergent data streams: kinematic profiling, kinetic sequencing, neuromuscular activation patterns, and clinical screens. Objective metrics (range of motion (ROM), joint angular velocity, ground reaction forces, and electromyographic (EMG) onset timing) are integrated with qualitative video analysis to classify constraints as predominantly **mobility**, **stability**, or **motor control** driven. This multimodal approach reduces diagnostic ambiguity and permits prioritized intervention planning tied to specific swing phases (backswing, transition, downswing, follow-through).

Recommended assessment batteries combine sport‑specific and clinical tests to isolate impairments. Typical components include:

- Thoracic rotation ROM (seated and standing)

- Hip internal/external rotation (prone and supine)

- Lumbar flexion/rotation tolerance (active functional reach)

- Single‑leg balance and perturbation responses (time-to-stabilize)

- Anti‑rotation core strength (Pallof press metrics)

- 3D motion capture of swing (segmental timing, X‑factor, kinematic sequence)

- Surface EMG timing of trunk and lower-limb musculature

These tests are interpreted within the athlete’s injury history and performance goals to determine the primary constraint(s) affecting swing efficiency and safety.

Intervention plans are individualized and triaged according to the dominant limitation. The table below summarizes exemplar pairings of constraint, targeted intervention, and anticipated mechanical change. Use objective re‑testing every 4-8 weeks to track adaptation and adjust programming.

| Constraint | Targeted Intervention | Expected Mechanical Effect |
|---|---|---|
| Thoracic hypomobility | Thoracic mobility + dynamic rotation drills | Increased upper-torso rotation, improved sequencing |
| Hip internal rotation deficit | Joint-specific ROM + load-bearing control | More stable pelvis, less compensatory lumbar motion |
| Poor motor sequencing | EMG-guided neuromuscular training, tempo drills | Earlier gluteal activation, refined energy transfer |

Progression emphasizes transfer to task: begin with isolated remediation (mobility/stability drills), advance to integrated motor patterns under progressive load and velocity, and finalize with on‑course variability exposure. Monitoring employs both performance (clubhead speed, ball launch) and health (pain, movement quality) metrics; use biofeedback (inertial sensors, real‑time EMG) to accelerate motor learning. Documented reductions in compensatory lumbar motion and improvements in kinematic sequencing are reliable indicators that interventions are producing desirable mechanical adaptations while mitigating injury risk.

Real-Time Feedback Systems and Motor Learning Principles: Implementing Augmented Feedback to Accelerate Skill Acquisition and Consistency

Contemporary motor learning theory distinguishes between two principal forms of augmented feedback: Knowledge of Results (KR), which is numerical outcome information (e.g., ball speed, carry distance), and Knowledge of Performance (KP), which is movement-specific information (e.g., clubhead path, pelvis rotation). Effective real-time systems translate raw sensor streams into these feedback types with attention to timing and fidelity. Immediate KP can accelerate error detection but risks creating performer dependency; consequently, optimal practice designs manipulate feedback frequency and delay to support internal model formation while preserving adaptability. Empirical principles such as bandwidth feedback and progressive fading schedules remain central: deliver precise KP only when deviations exceed a task-relevant threshold, and reduce frequency as performance stabilizes to promote retention and transfer.

Hardware and software choices shape what feedback is feasible and how it is interpreted. Low-latency inertial measurement units (IMUs), optical motion capture, radar/laser launch monitors, and force/pressure platforms each offer tradeoffs among spatial resolution, temporal latency, and ecological validity. The table below summarizes typical modalities and their pragmatic characteristics for coaching contexts:

| Modality | Primary Signal | Typical Latency | Primary Learning Effect |
|---|---|---|---|
| IMUs (wearables) | Angular kinematics | 10-50 ms | KP for sequencing |
| Optical motion capture | 3D marker trajectories | 20-100 ms | High-fidelity technique analysis |
| Launch monitors | Ball/club impact metrics | <50 ms | KR for outcome control |
| Pressure/force plates | Ground reaction profiles | <50 ms | KP for weight transfer |

Translating system capabilities into practice requires intentional instructional design. Recommended implementation tactics include:

- Thresholded alerts: only notify when key metrics exceed defined error bounds to reduce information overload;

- Faded frequency: start with high feedback density during acquisition and gradually reduce to promote consolidation;

- External-focus cues: pair KP with external, outcome-oriented instructions (e.g., target-line acceleration) to exploit attentional advantages;

- Intermittent summary KR: provide aggregated outcome statistics after blocks of repetitions to support error detection without micro-managing movement.
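The thresholded-alert and faded-frequency tactics can be combined in a small scheduling rule, sketched below. The linear fade from 100% to 20% feedback probability, the 2° bandwidth, and the function names are assumptions for illustration; appropriate values depend on the task and the learner.

```python
import random

def outside_bandwidth(error, bandwidth):
    """Bandwidth rule: feedback is a candidate only when error exceeds tolerance."""
    return abs(error) > bandwidth

def faded_probability(trial_index, n_trials, start=1.0, end=0.2):
    """Linearly reduce the feedback probability across a practice block."""
    fraction = trial_index / max(n_trials - 1, 1)
    return start + (end - start) * fraction

def should_give_feedback(error, bandwidth, trial_index, n_trials, rng):
    """Combine both rules: out-of-band error AND a faded random draw."""
    if not outside_bandwidth(error, bandwidth):
        return False
    return rng.random() < faded_probability(trial_index, n_trials)

# Hypothetical block of 10 swings with club-path errors in degrees.
rng = random.Random(0)  # fixed seed so the schedule is reproducible
errors = [4.0, -3.5, 1.0, 2.8, -0.5, 3.1, 0.9, -2.6, 1.5, 2.2]
schedule = [should_give_feedback(e, 2.0, i, len(errors), rng) for i, e in enumerate(errors)]
print(schedule)
```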

Evaluation metrics should move beyond single-shot improvement and quantify stability, adaptability, and transfer. Use retention tests (no augmented feedback) at delayed intervals and transfer tasks under varied environmental constraints to assess robustness. Key quantitative indicators include within-subject standard deviation of launch direction, trial-to-trial variability in clubhead path, and success rates on perturbed tasks; monitor these across progressive feedback schedules. Algorithmically, adaptive feedback that modulates threshold sensitivity based on recent variability and employs intermittent reinforcement (reward signals for small improvements) tends to accelerate consolidation while minimizing dependency. Maintain a coaching log that correlates feedback parameters with retention outcomes so system tuning becomes evidence-based rather than purely intuitive.

Validation, Reliability, and Translational Implementation: Ensuring Robust Metrics, Reporting Standards, and Coach-Oriented Recommendations

Robust analytical systems require rigorous **validation strategies** that link laboratory-derived mechanics to on-course performance. Given golf’s inherent variability in playing surfaces and environmental conditions, validation must move beyond isolated lab trials to include field-based cross-validation across multiple courses and shot types. Validation protocols should specify criterion measures (e.g., 3D motion capture, force plate ground reaction, ball-tracking radar), state prespecified acceptable error bounds, and document contexts of use so that reported metrics are interpretable by scientists and practitioners alike.

Reliability assessment must be explicit, quantitative, and reproducible: inter- and intra-rater consistency, device-to-device agreement, and within-subject test-retest stability form the backbone of dependable metrics. Standard statistical outputs should include **intraclass correlation coefficients (ICC)**, **standard error of measurement (SEM)**, coefficient of variation (CV), and Bland-Altman limits of agreement. Recommended reporting items for every study or system include:

- Population descriptors (skill level, age, injury status);

- Measurement protocol (sampling rates, marker models, calibration routines);

- Reliability statistics (ICC, SEM, CV) with confidence intervals (CIs); and

- Environmental context (indoor lab vs. outdoor fairway, wind conditions).
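Several of the reliability statistics listed above follow from short formulas once an ICC is available. The sketch below uses SEM = SD·√(1 − ICC), MDC95 = 1.96·√2·SEM, CV as SD/mean, and Bland-Altman limits as bias ± 1.96·SD of the paired differences. The ICC is taken as an input rather than estimated, and the clubhead-speed values are invented for illustration.

```python
import math
import statistics

def sem_from_icc(sd, icc):
    """Standard error of measurement from a between-subject SD and an ICC."""
    return sd * math.sqrt(1.0 - icc)

def mdc95(sem):
    """Minimal detectable change at 95% confidence for a test-retest design."""
    return 1.96 * math.sqrt(2.0) * sem

def cv_percent(values):
    """Coefficient of variation as a percentage of the mean."""
    return 100.0 * statistics.stdev(values) / statistics.mean(values)

def bland_altman(a, b):
    """Bias and 95% limits of agreement between two paired measurement sets."""
    diffs = [x - y for x, y in zip(a, b)]
    bias = statistics.mean(diffs)
    spread = 1.96 * statistics.stdev(diffs)
    return bias, bias - spread, bias + spread

# Hypothetical clubhead speeds (m/s) from two devices on the same three swings.
device_a = [45.0, 47.0, 49.0]
device_b = [44.0, 47.0, 48.0]

sem = sem_from_icc(sd=2.0, icc=0.91)
print(f"SEM {sem:.2f} m/s, MDC95 {mdc95(sem):.2f} m/s, CV {cv_percent(device_a):.1f}%")
print("Bland-Altman (bias, lower, upper):", bland_altman(device_a, device_b))
```

A change smaller than the MDC95 cannot be distinguished from measurement noise, which is why it is a useful floor for any coach-facing threshold.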

Translational implementation focuses on actionable outputs that coaches can use in real time. The following compact table maps commonly reported biomechanical metrics to pragmatic coach-facing thresholds and suggested feedback modalities, enabling rapid translation from numbers to drills or cues. Use routine calibration and validation checks before deploying thresholds in a coaching workflow.

| Metric | Coach Threshold | Suggested Feedback |
|---|---|---|
| Peak clubhead speed | ±0.5 m/s vs baseline | Max-effort swing tempo drill |
| Pelvic rotation velocity | Within 10% of skilled norm | Targeted rotational power exercise |
| Ground reaction symmetry | CV < 8% | Weight-shift cueing & balance drills |

For practical adoption, establish **standardized reporting templates**, routine device cross-calibration, and coach education modules that interpret uncertainty and error bounds rather than presenting point estimates alone. Implementation plans should prioritize minimal cognitive load for coaches: visual dashboards that flag deviations beyond SEM thresholds, succinct drill prescriptions tied to specific metric failures, and periodic system audits. Transparency (open methods, example datasets) and periodic reassessment ensure that metrics remain robust as technology and playing conditions evolve.

Q&A

Q1. What is meant by an “analytical framework” for golf swing mechanics?

A1. An analytical framework is a structured, reproducible set of concepts, measurement modalities, data‑processing procedures, and statistical/modeling approaches used to characterize, quantify, and interpret the biomechanical and neuromuscular determinants of the golf swing. It integrates kinematic description (motion), kinetic analysis (forces and moments), and neuromuscular assessment (EMG, timing, coordination) to support hypothesis testing, intervention design, and performance optimization.

Q2. What are the core components of a robust framework?

A2. Core components include: (1) clear operational definitions of swing phases and events (address, takeaway, top of backswing, transition, downswing, impact, follow-through); (2) standardized measurement protocols (marker sets, sensor placement, sampling rates); (3) kinematic variables (segment angles, angular velocities, sequence/timing); (4) kinetic variables (ground reaction forces, joint moments, club-head kinetics); (5) neuromuscular variables (EMG amplitude, timing, muscle synergies); (6) rigorous data processing (filtering, gap filling, coordinate transformation); and (7) statistical/modeling techniques (inverse dynamics, musculoskeletal simulation, multivariate statistics, machine learning).

Q3. Which measurement technologies should be integrated?

A3. A multimodal approach is recommended: optical motion capture (high-resolution kinematics), inertial measurement units (IMUs) for field validation, force plates/wireless force sensors for ground reaction forces, instrumented clubs or launch monitors for club kinetics and ball flight, surface EMG for muscle activation, and high-speed videography for redundancy and qualitative checks. For detailed joint loading or muscle force estimation, combine motion capture with musculoskeletal modeling.

Q4. What sampling rates and filtering strategies are appropriate?

A4. Sampling rates should match the fastest event of interest: optical motion capture commonly runs at 200-500 Hz for body segments; instrumented clubs and launch monitors may require >1000 Hz for impact; force plates and EMG are typically sampled at 1000-2000 Hz. Filtering should be justified by residual analysis; common practice uses low-pass Butterworth filters with cut-offs chosen per variable (e.g., 6-20 Hz for segment kinematics depending on movement frequency, higher for force and EMG processing). Document filtering choices and perform sensitivity analyses.

Q5. Which kinematic metrics are most informative?

A5. Key metrics: club-head speed at impact, clubface orientation at impact, swing plane and its variability, pelvis-thorax separation (X-factor), peak and time-series angular velocities of pelvis, trunk, and shoulders, intersegmental sequencing (proximal-to-distal timing), and center of mass trajectories. Time-normalized waveforms and event-based peak/time measures both have value.

Q6. Which kinetic metrics should be prioritized?

A6. Priorities include peak and time‑series ground reaction force (vertical, sagittal, transverse), net joint moments (hip, lumbar, shoulder, elbow) via inverse dynamics, joint power and rate of joint power transfer, and impulse/momentum metrics related to ball speed. Normalization to body mass and stature is crucial for between‑subject comparisons.

Q7. How should neuromuscular factors be quantified?

A7. Surface EMG can quantify onset timing relative to swing events, peak and mean activation levels, and intermuscular coordination. Advanced approaches include muscle synergy analysis (nonnegative matrix factorization) and time‑frequency methods for activation dynamics. EMG normalization to maximal voluntary contraction (MVC) or task‑specific reference contractions should be applied and reported.

Q8. How can interindividual variability be handled analytically?

A8. Use mixed‑effects models to account for repeated measures and subject‑level random effects, cluster analysis or latent class models to identify movement phenotypes, principal component analysis (PCA) or functional PCA for dimensionality reduction of time‑series, and statistical parametric mapping (SPM) for waveform comparisons. Report effect sizes and confidence intervals to contextualize variability.

Q9. What modeling approaches are useful for causal inference?

A9. Inverse dynamics provides joint moments and powers but not muscle forces. Musculoskeletal simulation (e.g., OpenSim) with optimization algorithms can estimate muscle force patterns and internal loading. Forward dynamics can test causal changes by simulating modified inputs. Machine learning models can predict outcomes (e.g., ball speed) but require careful cross-validation and interpretability techniques (e.g., SHAP values) for mechanistic insight.

Q10. How should sequencing and timing be quantified?

A10. Quantify relative timing of peak angular velocities (pelvis, trunk, lead arm, club), temporal lags between segment peaks (proximal-to-distal sequence), and coordination measures such as continuous relative phase. Use time-normalized profiles anchored to consistent events (e.g., impact) to compare across swings.

Q11. What are recommended protocols for ecological validity?

A11. Combine lab-based high-precision measurement with on-course or simulator testing using IMUs and instrumented clubs. Use representative tasks (full shots with realistic ball-flight demands) and consider fatigue and environmental variability. Report task constraints and discuss transferability.

Q12. How should injury risk be integrated into the framework?

A12. Include metrics linked to injury mechanisms: peak lumbar extension and rotation, torsional spine moments, cumulative loading cycles, and asymmetries in muscle activation or ground reaction forces. Use musculoskeletal models to estimate internal spinal loading and correlate with clinical indicators. Longitudinal designs are best for associating mechanics with injury incidence.

Q13. What statistical concerns are most important?

A13. Predefine primary outcomes and analysis plans to limit multiple-comparison bias; apply correction methods when necessary. Use appropriate models for repeated measures and nested data. Test reliability (intra- and inter-session ICCs) and report the minimal detectable change (MDC). For machine learning, ensure train/validation/test splits with participant-level separation.

Q14. How should data be normalized and reported?

A14. Normalize kinetic measures to body mass (N/kg), moments to body mass × height, and velocities to consistent units. Report sampling rates, filter types/cutoffs, marker sets, coordinate system definitions, event detection rules, and all preprocessing steps. Provide code or data when possible to enhance reproducibility.
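
A minimal sketch of these normalizations, assuming forces in newtons, moments in newton-metres, body mass in kilograms, and height in metres. The `Participant` class and function names are hypothetical helpers for illustration; the only fixed constant is the exact definition 1 mph = 0.44704 m/s.

```python
from dataclasses import dataclass

MPH_TO_MS = 0.44704  # exact by definition of the international mile


@dataclass
class Participant:
    mass_kg: float
    height_m: float


def normalize_force(force_n: float, p: Participant) -> float:
    """Force normalized to body mass (N/kg)."""
    return force_n / p.mass_kg


def normalize_moment(moment_nm: float, p: Participant) -> float:
    """Joint moment normalized to body mass x height (N*m per kg*m)."""
    return moment_nm / (p.mass_kg * p.height_m)


def mph_to_ms(speed_mph: float) -> float:
    """Convert a speed from miles per hour to metres per second."""
    return speed_mph * MPH_TO_MS


# Hypothetical golfer and measurements, for illustration only.
golfer = Participant(mass_kg=80.0, height_m=1.80)
print(normalize_force(1200.0, golfer))   # peak vertical GRF in N/kg
print(normalize_moment(144.0, golfer))   # joint moment in N*m/(kg*m)
print(mph_to_ms(100.0))                  # clubhead speed in m/s
```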

Q15. What are common methodological limitations and how can they be mitigated?

A15. Limitations include skin‑motion artifact for marker‑based kinematics, marker occlusion, limited sampling frequency for impact events, ecological differences between lab and course, and EMG cross‑talk. Mitigate by using cluster marker sets, redundant sensors, higher sampling rates for impact, combining optical capture with IMUs, and rigorous EMG electrode placement plus normalization. Conduct sensitivity analyses to quantify the impact of processing choices.

Q16. How can the framework guide coaching and intervention design?

A16. Translate mechanistic findings into targeted interventions: sequencing deficits → coordination drills and tempo training; insufficient force production → strength and power programs; timing inconsistencies → biofeedback (auditory/visual) and motor learning methods; fault patterns → constraint‑led task modifications. Prioritize interventions that are mechanistically linked to the performance metric of interest (e.g., club‑head speed, accuracy, consistency).

Q17. What role do machine learning and data‑driven methods play?

A17. Machine learning can classify swing patterns, predict outcomes (ball speed, dispersion), and uncover complex multivariate relationships. Use interpretable approaches (e.g., regularized regression, tree‑based models with SHAP) to avoid black‑box outputs. Ensure models are trained on sufficiently large, diverse datasets and validated externally.

Q18. What are recommended directions for future research?

A18. Priorities include: integration of wearable sensor data for large‑scale, ecologically valid datasets; development of individualized musculoskeletal models; longitudinal studies linking mechanics to performance trajectory and injury; real‑time biofeedback systems based on validated models; and standardized reporting and data‑sharing frameworks for replication and meta‑analysis.

Q19. How should researchers ensure ethical and practical considerations?

A19. Obtain informed consent, ensure safe testing protocols (warm‑up, fatigue monitoring), and anonymize datasets. Consider participant time and burden, balancing measurement comprehensiveness against feasibility. For commercial collaborations (e.g., with launch‑monitor companies), disclose conflicts of interest.

Q20. What practical checklist should researchers use when designing a study based on this framework?

A20. Checklist:

- Define study aims and primary outcomes a priori.

- Specify swing phase/event definitions and marker/sensor sets.

- Choose sampling rates appropriate to the fastest event.

- Predefine the signal processing pipeline (filtering, normalization).

- Include reliability testing and sample size justification.

- Select statistical/modeling approaches appropriate to the data structure.

- Plan ecological validation or field testing if translational goals exist.

- Pre-register the analysis plan where possible and share data/code.

Closing remark: This Q&A summarizes best practices and analytical considerations for constructing and applying an integrated framework to study modern golf swing mechanics. For implementation, researchers should align measurement selections and modeling complexity to their specific research questions (e.g., performance prediction vs. internal loading estimation) and report methods fully to support replication and applied translation.

Conclusion

This review has outlined an integrative analytical framework for optimizing golf-swing mechanics by synthesizing biomechanical modeling, motion-capture analytics, and data-driven feedback loops. Central to this framework is the iterative coupling of high-fidelity measurement with mechanistic models: precise kinematic and kinetic data acquired through multi-modal sensing enable the parameterization and validation of musculoskeletal and rigid‑body models, while model outputs guide targeted interventions that are evaluated empirically. Machine‑learning techniques complement this pipeline by identifying latent patterns in large datasets, facilitating individualized performance profiling and predictive assessment of technique changes.

Several cross-cutting themes emerge. First, measurement validity and repeatability are foundational; without standardized protocols for sensor placement, calibration, and data preprocessing, downstream modeling and inference are compromised. Second, the integration of deterministic biomechanical models with probabilistic, data-driven methods yields more robust and interpretable recommendations than either approach alone. Third, translational pathways, from laboratory insights to on-course behaviour, require attention to ecological validity, athlete-specific constraints, and coach-athlete communication to ensure sustained performance gains.

Looking forward, priority research directions include: longitudinal studies that assess the durability of technique adaptations informed by analytical feedback; development of real‑time, low-latency platforms for in-situ coaching; deeper exploration of inter-individual variability in motor control strategies; and the creation of open, standardized datasets to accelerate comparative research. Ethical and practical considerations, including data privacy, equitable access to advanced analytics, and the risk of over-reliance on automated recommendations, must also be addressed through interdisciplinary collaboration and stakeholder engagement.

In sum, advancing golf-swing optimization demands rigorous measurement, principled modeling, and careful translation into practice. By adhering to standards of reproducibility and integrating mechanistic understanding with scalable data analytics, researchers and practitioners can enhance efficiency, power, and accuracy in a manner that is both scientifically defensible and practically meaningful.

Analytical Frameworks for Golf Swing Mechanics

What an analytical framework is and why it matters for your golf swing

An analytical framework for golf swing mechanics is a structured, repeatable process that transforms raw movement and ball-flight data into actionable coaching feedback. By combining biomechanics, motion-capture analytics, force measurements and launch monitor outputs into a single workflow, coaches and players can improve clubhead speed, ball flight, accuracy and consistency in a measurable way.

Core building blocks of a golf-swing analysis framework

- Biomechanical modeling: Joint angles, segment velocities, kinematic sequence and hip-shoulder separation.

- Motion-capture analytics: Optical systems or IMUs to quantify swing plane, tempo, transition and impact events.

- Launch monitor integration: Clubhead speed, smash factor, spin rate, launch angle, carry and total distance (TrackMan, FlightScope style metrics).

- Ground reaction and force data: Force plates to measure weight shift, ground reaction force (GRF), lateral force and vertical impulse.

- Data fusion & analytics: Signal processing, event detection, machine learning models and visualization dashboards for coaching decisions.

Step-by-step framework to evaluate and optimize swing mechanics

1. Define objectives and KPIs

Start with clear goals. Examples of KPIs for a player:

- Clubhead speed (mph / kph)

- Ball speed and smash factor

- Spin rate and launch angle for chosen club

- Kinematic sequence (timing of pelvis → thorax → arms → club)

- Ground reaction force symmetry and peak force

- Consistency metrics: standard deviation of club path and face angle at impact
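
Two of the KPIs above reduce to simple arithmetic, sketched here under stated assumptions: smash factor is ball speed divided by clubhead speed (both in the same units), and consistency is summarized as the sample standard deviation across swings. The function names and the face-angle values are hypothetical and for illustration only.

```python
from statistics import mean, stdev
from typing import Dict, Sequence


def smash_factor(ball_speed: float, clubhead_speed: float) -> float:
    """Ratio of ball speed to clubhead speed (same units for both)."""
    if clubhead_speed <= 0:
        raise ValueError("clubhead_speed must be positive")
    return ball_speed / clubhead_speed


def consistency_summary(values: Sequence[float]) -> Dict[str, float]:
    """Mean and sample standard deviation of a per-swing metric."""
    return {"mean": mean(values), "sd": stdev(values)}


# Hypothetical face angles at impact (degrees, positive = open), ten swings.
face_angle_deg = [1.2, -0.8, 2.1, 0.4, -1.5, 0.9, 1.8, -0.3, 0.6, 1.1]
print(smash_factor(ball_speed=150.0, clubhead_speed=100.0))
print(consistency_summary(face_angle_deg))
```

Tracking the standard deviation alongside the mean separates a player who is consistently off-target from one whose average is fine but whose swings scatter widely.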

2. Data collection protocol

A robust data collection plan reduces noise and increases repeatability:

- Calibrate motion-capture systems and launch monitors before each session.

- Use consistent ball, tee height and target lines. Record environmental conditions.

- Capture multiple swings per condition (minimum 8-12 swings for statistical confidence).

- Record both club and ball data: marker/IMU kinematics + launch monitor flight metrics.

3. Model selection & metric extraction

Choose models and metrics relevant to the goal (distance, accuracy, injury prevention). Typical outputs include:

- Joint angles and angular velocities (hip, shoulder, elbow, wrist)

- Clubhead speed and path, face angle at impact

- Timing of peak segment velocities (kinematic sequence)

- Weight transfer curve and peak GRF

4. Signal processing & event detection

Apply filters and automated event detection to extract consistent metrics: low-pass Butterworth filters, peak detectors for max clubhead speed, and algorithms to detect impact frame. Validate algorithms visually for a subset of swings.
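
To make this step concrete, here is a minimal pure-Python sketch of a second-order low-pass Butterworth filter applied forward and backward (zero lag), followed by a simple peak detector. The 20 Hz cutoff, 240 Hz sampling rate, and synthetic speed trace are assumptions chosen for illustration; appropriate cutoffs depend on the signal and should be reported. In practice a library routine such as SciPy's `scipy.signal.butter` with `scipy.signal.filtfilt` would normally be used instead of a hand-written filter.

```python
import math
from typing import List, Sequence, Tuple


def butter2_lowpass(cutoff_hz: float, fs_hz: float) -> Tuple[tuple, tuple]:
    """Second-order Butterworth low-pass coefficients (bilinear transform)."""
    k = math.tan(math.pi * cutoff_hz / fs_hz)
    norm = 1.0 / (1.0 + math.sqrt(2.0) * k + k * k)
    b0 = k * k * norm
    b = (b0, 2.0 * b0, b0)
    a = (2.0 * (k * k - 1.0) * norm, (1.0 - math.sqrt(2.0) * k + k * k) * norm)
    return b, a


def _single_pass(x: Sequence[float], b: tuple, a: tuple) -> List[float]:
    # Initialize the filter state at the first sample to limit start-up transients.
    x1 = x2 = y1 = y2 = x[0]
    out = []
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[0] * y1 - a[1] * y2
        out.append(yn)
        x2, x1 = x1, xn
        y2, y1 = y1, yn
    return out


def zero_lag_lowpass(x: Sequence[float], cutoff_hz: float, fs_hz: float) -> List[float]:
    """Filter forward, then backward, so the phase shifts cancel.

    Note: the double pass lowers the effective cutoff; no correction is applied here.
    """
    b, a = butter2_lowpass(cutoff_hz, fs_hz)
    forward = _single_pass(x, b, a)
    backward = _single_pass(forward[::-1], b, a)
    return backward[::-1]


def peak_index(values: Sequence[float]) -> int:
    """Index of the maximum value (e.g., frame of max clubhead speed)."""
    return max(range(len(values)), key=lambda i: values[i])


# Synthetic clubhead-speed trace (m/s): smooth bump plus high-frequency noise.
raw = [
    40.0 * math.exp(-0.5 * ((i - 100) / 15.0) ** 2) + 0.5 * (-1) ** i
    for i in range(200)
]
filtered = zero_lag_lowpass(raw, cutoff_hz=20.0, fs_hz=240.0)
print("peak frame:", peak_index(filtered))
```

Because automated detectors can fail on atypical swings, the visual check on a subset of swings recommended above still applies.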

5. Analytics & interpretation

Use descriptive statistics, trend charts and correlation analyses. Examples:

- Correlate pelvis rotation velocity to clubhead speed.

- Analyze how early release affects smash factor and spin rate.

- Segment players by swing type and develop targeted drills.
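
The first example above, correlating pelvis rotation velocity with clubhead speed, can be sketched with a plain Pearson correlation. The per-swing values below are invented for illustration, and `pearson_r` is a hypothetical helper; a correlation across a handful of swings describes association only and does not establish that changing pelvis velocity will change clubhead speed.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need two series of equal length with at least 3 points")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical per-swing values for one player.
pelvis_peak_deg_s = [420.0, 450.0, 480.0, 510.0, 540.0]
clubhead_mph = [92.0, 94.5, 96.0, 99.0, 101.5]
r = pearson_r(pelvis_peak_deg_s, clubhead_mph)
print(f"r = {r:.3f}")
```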

Tools & technologies commonly used

| Tool | Primary use | Quick tip |
|---|---|---|
| Optical motion capture | High-precision kinematic data | Best for lab-based biomechanical models |
| IMUs (wearables) | Field-friendly swing metrics | Good for on-course tracking and consistency checks |
| Launch monitors | Ball and club-flight metrics | Essential for distance and spin analysis |
| Force plates | Ground reaction and weight shift | Helps quantify power generation from the ground up |

Data fusion: combining motion capture, force and ball-flight data

The real value is in cross-referencing systems: align motion-capture frames with launch monitor timestamps and GRF peaks to create a single time-aligned dataset. This allows statements like “peak pelvic rotation precedes max clubhead speed by X ms” or “an early lateral force peak correlates with increased side spin.”
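
One way to build such a time-aligned dataset is to shift one system's timestamps onto the other's clock and resample by linear interpolation, as sketched below. The clock offset is assumed to be known already (for example from a shared trigger or sync pulse); estimating it is outside this sketch. The function names and the sample values are hypothetical.

```python
from bisect import bisect_left
from typing import List, Sequence


def interpolate_at(t: float, times: Sequence[float], values: Sequence[float]) -> float:
    """Linearly interpolate (times, values) at time t; clamp outside the range."""
    if t <= times[0]:
        return values[0]
    if t >= times[-1]:
        return values[-1]
    j = bisect_left(times, t)
    t0, t1 = times[j - 1], times[j]
    w = (t - t0) / (t1 - t0)
    return values[j - 1] + w * (values[j] - values[j - 1])


def align_to_reference(
    ref_times: Sequence[float],
    src_times: Sequence[float],
    src_values: Sequence[float],
    src_clock_offset: float,
) -> List[float]:
    """Resample a source stream onto the reference time base.

    `src_clock_offset` is the number of seconds to add to source timestamps
    to express them on the reference clock.
    """
    shifted = [t + src_clock_offset for t in src_times]
    return [interpolate_at(t, shifted, src_values) for t in ref_times]


# Hypothetical streams: motion-capture frames as reference, force plate as source.
mocap_times = [0.00, 0.01, 0.02, 0.03, 0.04]
grf_times = [0.000, 0.005, 0.010, 0.015, 0.020, 0.025, 0.030, 0.035]
grf_newtons = [700.0, 720.0, 760.0, 820.0, 900.0, 980.0, 1040.0, 1080.0]
grf_on_mocap = align_to_reference(mocap_times, grf_times, grf_newtons, 0.005)
print(grf_on_mocap)
```

Once every stream shares one time base, lags such as "peak pelvic rotation to max clubhead speed" become simple differences between peak times.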

Implementing feedback loops for learning and adaptation

Feedback should be tailored by the timeframe and the learning objective:

- Real-time audio/visual feedback: Use simple metrics (clubhead speed, face angle) to immediately correct gross errors during practice.

- Post-session dashboards: Provide annotated swing traces, kinematic sequence charts and annotated video overlays for deeper learning.

- Progress monitoring: Weekly or monthly trend reports focusing on KPIs and variance reduction.

Practical coaching workflow

- Baseline assessment: record swings with standard driver and 7-iron.

- Identify 2-3 leverage points (e.g., pelvis timing, wrist hinge, face control).

- Design drills linked to those metrics and set measurable targets.

- Implement drills with immediate feedback tools (mirrors, sensors, video overlays).

- Reassess after 2-4 weeks and iterate.

Benefits and practical tips

- Data-driven coaching reduces guesswork and accelerates measurable improvement in clubhead speed and accuracy.

- Focus on consistency metrics (standard deviation) as much as peak performance numbers.

- Use lightweight IMUs on the glove or shaft for on-course practice; reserve optical systems for periodic lab-level deep dives.

- Prioritize a single, high-impact change at a time; don’t overload the student with multiple mechanical fixes simultaneously.

Case studies: short applied examples

Case study A – Amateur golfer seeking distance

Baseline: 90 mph clubhead speed, inconsistent launch angle, moderate spin. Tools used: IMU on lead wrist, launch monitor, video.

- Finding: poor pelvis-thorax separation and early arm release.

- Intervention: rotational power drills and wrist-cocking timing cue, plus a tempo metronome.

- Result: +6-7 mph clubhead speed over 6 weeks and straighter ball flight; improved smash factor.

Case study B – Tour-level pre-event tuning in a lab

Baseline: Pro player with excellent speed but slight right miss. Tools used: optical motion capture, force plates, high-end launch monitor.

- Finding: slight late lateral GRF causing out-to-in path and closed face at impact.

- Intervention: force-plate training to alter weight shift timing and a face-control impact drill.

- Result: reduction in lateral dispersion and improved scoring consistency during event week.

Common pitfalls and troubleshooting

- Poor synchronization: Unsynced systems lead to misleading correlations. Always cross-check timestamps.

- Overfitting to lab conditions: Fixes that work in the lab may fail on course – validate on-range and on-course.

- Ignoring variability: A certain variability is natural; aim to reduce harmful variance, not eliminate natural adaptability.

- Too many metrics: Track priority KPIs. More data is not always better unless it maps to a coaching decision.

Actionable training drills mapped to measurable metrics

- Tempo metronome drill (Tempo): Use audio metronome to normalize backswing-to-downswing ratio – track timing of peak clubhead speed.

- Separation band drill (Hip-shoulder separation): Resistance-band rotations to reinforce a delayed shoulder rotation – measure increased X-factor at top.

- Step-through power drill (GRF & sequencing): Step-forward drill emphasizing lateral force into the ball – measure peak vertical and lateral GRF increases.

- Impact bag & face awareness (face angle): Train consistent contact and face control – confirm with reduced standard deviation of face angle at impact.

Key performance indicators (KPIs): simple table to track progress

| Metric | Why it matters | Sample target |
|---|---|---|
| Clubhead speed | Primary driver of distance | +5 mph over baseline |
| Smash factor | Efficiency of energy transfer | Improve by 0.03-0.05 |
| Std dev of face angle | Consistency in direction | Reduce by 20%-30% |
| Pelvis → Thorax timing (ms) | Kinematic sequencing for power | Optimal window depends on player; track trend |

Measuring progress and reporting

Keep weekly or monthly scorecards with baseline vs current values and a short coaching note about the next training focus. Visual trendlines (moving average, confidence intervals) help players see progress and reinforce behavioral changes.
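
A scorecard of this kind needs very little code. The sketch below computes a trailing moving average for a trendline and a baseline-versus-current row for one KPI; the window length, function names, and weekly values are assumptions for illustration, and confidence intervals are omitted for brevity.

```python
from typing import Dict, List, Sequence, Union


def moving_average(values: Sequence[float], window: int) -> List[float]:
    """Trailing moving average; early points average over what is available."""
    if window < 1:
        raise ValueError("window must be at least 1")
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


def scorecard_row(metric: str, baseline: float, current: float) -> Dict[str, Union[str, float]]:
    """Baseline vs current comparison for one KPI."""
    change = current - baseline
    return {
        "metric": metric,
        "baseline": baseline,
        "current": current,
        "change": change,
        "pct_change": 100.0 * change / baseline,
    }


# Hypothetical weekly mean clubhead speeds (mph) across a training block.
weekly_speed = [90.0, 90.8, 91.5, 91.2, 92.6, 93.4, 94.5]
print(moving_average(weekly_speed, window=3))
print(scorecard_row("Clubhead speed (mph)", weekly_speed[0], weekly_speed[-1]))
```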

First-hand coaching recommendations

From working with players across skill levels, the most effective analytics frameworks:

- Start with one technology you can use consistently (an IMU or a mid-range launch monitor).

- Set three measurable goals per training block (speed, consistency, and a movement quality).

- Use video overlays with key kinematic markers – players internalize changes faster when they see the mechanical cause-and-effect.

- Don’t chase every metric; target the ones that move the needle for the player’s objective (distance, accuracy, or durability).

Next steps and resources

- Plan a baseline assessment session with a combined launch monitor + kinematic capture.

- Keep a simple dashboard (spreadsheet or dashboard tool) tracking KPIs and practice drills.

- Consider periodic lab-level testing to recalibrate models and validate on-course transfer.